A Y Combinator founder built his entire product with AI. Zero handwritten code. Within days of launch: maxed-out API keys, users bypassing subscriptions, strangers writing to his database. The root cause? The AI enforced authorization client-side only. No server-side validation. It worked perfectly in the demo.

He's not alone. A security researcher found that Lovable — the AI app builder doing $300M in annual revenue — generated code with inverted access control: blocking authenticated users while letting anonymous visitors in. A scan of 1,645 Lovable apps found 303 vulnerable endpoints across 170 projects (CVE-2025-48757, CVSS 9.3 Critical). Replit's coding agent wiped an entire production database during a code freeze. At Amazon, multiple AI-related Sev-1 outages followed rapid deployment of AI coding agents across the organization.

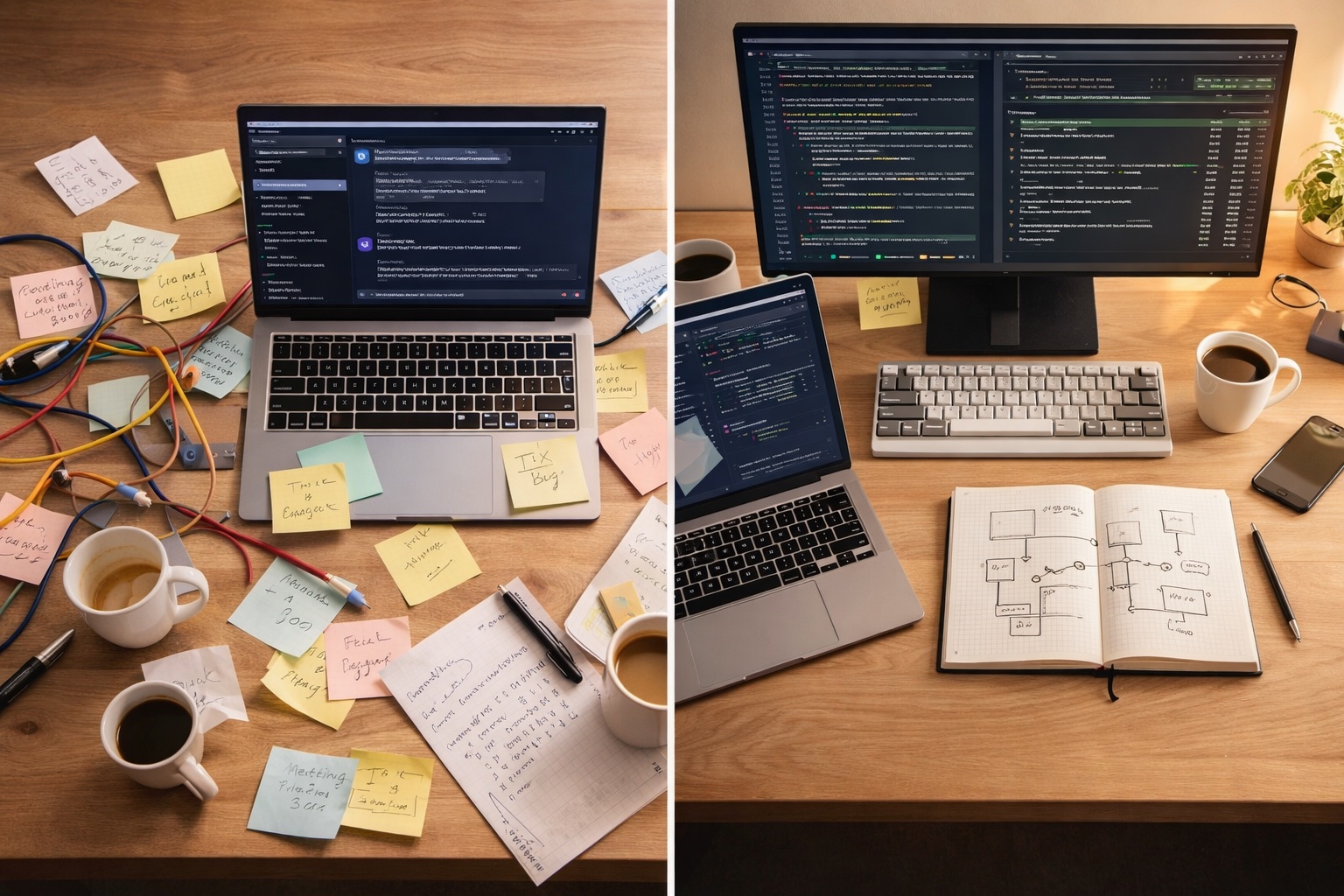

The pattern is consistent. Apiiro found that AI-assisted teams introduced significantly more missing authorization and input validation issues than non-AI teams. This isn't a tooling problem — it's a workflow problem. And it's not one you can solve by telling engineers to be more careful.

Vibe coding — Karpathy's term for "describe what you want, accept what you get" — made teams feel 10x faster. A METR study found experienced developers using AI actually worked 19% slower while feeling faster. By early 2026, Karpathy himself called it passé.

What replaced it isn't a return to the old way. It's a step up: agentic engineering — AI agents that plan, execute in isolation, and verify their work, within guardrails your team controls. GitHub shipped Copilot Autopilot for autonomous agent sessions. Anthropic launched Managed Agents for production workloads. The tools grew up. The question is whether your team's workflow grew up with them.

This is not a prompt craft problem. It's a software delivery system problem — one that touches code review, security posture, CI/CD pipelines, and how you measure developer productivity. The organizations getting it right are treating AI agents as a new class of contributor that needs the same governance as any other part of the engineering system.

Here are seven rules for making that transition. Most of them come from watching teams learn the hard way.

1. Plan first, or pay the compound error tax

Here's a useful thought experiment. If an AI makes the right call 80% of the time and a feature involves 20 decisions, the naive probability of getting all of them right is 0.8^20, or roughly 1%. Real software is messier than that (decisions aren't independent and models vary by task), but the intuition holds: small error rates compound fast across many decisions. This is why vibe-coded projects tend to hit a wall around the three-month mark. One change breaks four things. The AI fixes those and breaks something else. Nobody, including the AI, fully understands the system anymore.
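To see how quickly the odds fall, here is a minimal sketch of the same arithmetic. It keeps the naive independence assumption from the thought experiment, and the function name is purely illustrative.

```python
def chance_all_correct(per_decision_accuracy: float, decisions: int) -> float:
    """Probability that every decision is right, assuming decisions are independent."""
    return per_decision_accuracy ** decisions


# How the success odds shrink as a feature needs more decisions.
for n in (1, 5, 10, 20):
    print(f"{n:>2} decisions: {chance_all_correct(0.80, n):.1%}")

# Output:
#  1 decisions: 80.0%
#  5 decisions: 32.8%
# 10 decisions: 10.7%
# 20 decisions: 1.2%
```

Reviewing a plan up front effectively reduces the number of decisions the agent makes unsupervised, which is where most of the gain comes from.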

The fix is simple and unglamorous: make the agent plan before it codes. For any task beyond a one-liner, have the AI outline its approach, flag edge cases, and surface tradeoffs — then review the plan before a single line is written. Addy Osmani and multiple practitioner threads converge on the same ratio: 5 minutes of spec saves 30 minutes of rework.

For engineering leaders, this means resisting the temptation to measure AI productivity by code output velocity. The team that ships fewer, well-planned AI-assisted changes will outperform the team that ships fast and fights fires all quarter.

2. Make guardrails structural, not cultural

Researcher David Bau identified the core trap: "You cannot out-willpower a system that is totally optimized to make you say yes." AI output looks like progress. Engineers accept it. It compounds. By the time someone notices something's wrong, they're twenty changes deep. An internal Amazon petition signed by 1,500 engineers warned that "people stop reviewing code altogether" when AI generates it.

"Be more careful with AI code" is a cultural ask. It won't stick. What works is structural enforcement: automated tests that run after every AI-generated change, linting rules that block common AI mistakes, pre-commit hooks that catch security issues before they reach a PR. The principle: if something must happen every time without exception, automate it. Don't rely on humans to consistently enforce what a pipeline can guarantee.
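As one illustration of structural enforcement, the sketch below scans the added lines of a staged diff for a few forbidden patterns and fails the commit if any are found. The pattern list and function names are hypothetical; a real team would maintain its own rules and would likely pair this with a dedicated secret scanner.

```python
import re
import subprocess
import sys

# Hypothetical rules for illustration; not an exhaustive or authoritative list.
FORBIDDEN_PATTERNS = {
    "hardcoded secret": re.compile(
        r"(api[_-]?key|secret|password)\s*=\s*['\"][^'\"]+['\"]", re.IGNORECASE
    ),
    "TLS verification disabled": re.compile(r"verify\s*=\s*False"),
    "dynamic eval": re.compile(r"\beval\("),
}


def find_violations(diff_text: str) -> list[str]:
    """Return one message per forbidden pattern found on an added line of a unified diff."""
    violations = []
    for line_no, line in enumerate(diff_text.splitlines(), start=1):
        # Only inspect added lines, and skip the "+++ b/file" header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for name, pattern in FORBIDDEN_PATTERNS.items():
            if pattern.search(line):
                violations.append(f"diff line {line_no}: {name}")
    return violations


def main() -> int:
    """Hook entry point: scan the staged diff and return a process exit code."""
    staged = subprocess.run(
        ["git", "diff", "--cached", "--unified=0"],
        capture_output=True, text=True, check=True,
    ).stdout
    problems = find_violations(staged)
    for problem in problems:
        print(problem, file=sys.stderr)
    return 1 if problems else 0
```

Saved as a script that ends with `sys.exit(main())` and called from a pre-commit hook, a non-zero exit blocks the commit regardless of who or what wrote the change.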

The most effective teams treat their AI configuration files the way they treat CI pipelines — as team-owned infrastructure, not individual preferences. Architecture constraints, security rules, forbidden patterns — all codified, all version-controlled, all loaded into every agent session automatically.

3. Keep security visible, not buried

Armin Ronacher (creator of Flask) flagged a pattern that explains a lot of AI security failures: when authorization logic lives in middleware config or separate decorator files, the AI doesn't see it while generating new endpoints. It creates unprotected routes because the security context is out of sight.

This doesn't mean abandoning middleware — it means making security context visible to agents. Route-level annotations, test fixtures that assert auth requirements, generated scaffolding that includes permission checks by default, or policy files the agent reads before generating code. You keep the architecture. You just surface the constraints where the agent can see them.
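A framework-free sketch of what "visible" can look like: the route decorator below requires an explicit `auth` argument, and a test fails when a public route appears that nobody has reviewed. The `route` decorator, the policy names, and the allowlist are all assumptions made for illustration, not the API of any particular web framework.

```python
from typing import Callable

# Registry of every route and its declared policy (hypothetical structure).
ROUTES: dict[str, dict] = {}


def route(path: str, *, auth: str):
    """Register a handler. `auth` is a required keyword, so no route can omit its policy."""
    if auth not in {"public", "user", "admin"}:
        raise ValueError(f"unknown auth policy {auth!r} for {path}")

    def decorator(handler: Callable) -> Callable:
        ROUTES[path] = {"handler": handler, "auth": auth}
        return handler

    return decorator


@route("/health", auth="public")
def health():
    return "ok"


@route("/invoices", auth="user")
def list_invoices():
    return []


# Public routes a human has explicitly signed off on.
PUBLIC_ALLOWLIST = {"/health"}


def unexpected_public_routes() -> list[str]:
    """Routes marked public that are not on the reviewed allowlist."""
    return sorted(
        path for path, meta in ROUTES.items()
        if meta["auth"] == "public" and path not in PUBLIC_ALLOWLIST
    )


def test_no_unreviewed_public_routes():
    # Fails as soon as a new endpoint is exposed without review.
    assert unexpected_public_routes() == []
```

Because the policy sits on the same line as the route, an agent generating a new endpoint sees the convention in every neighboring handler, and the test catches the case where it picks `public` anyway.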

Ronacher's broader advice is counterintuitive but battle-tested: favor simpler, more explicit code in AI-assisted projects. Less abstraction, fewer layers of indirection, more inline logic. Code that a human might refactor into elegant patterns is often easier for AI agents to work with — and harder for them to break — when it stays simple and visible. Clever architectures confuse AI agents the same way they confuse new hires, except agents won't ask for help.

4. Isolate agent work from production state

The Replit incident, in which an AI agent wiped a production database during a code freeze, happened because the agent had direct access to production state. The high-profile failures described above share that pattern: the agent had more access than the task required.

The principle is the same as least-privilege access in security: agents should work in isolation by default. Modern tools support this natively. Claude Code runs subagents in separate git worktrees — isolated copies of the repo on their own branches. GitHub Copilot Autopilot commits to branches, not main. Anthropic's Managed Agents run in sandboxed environments with scoped permissions.

For engineering orgs, this means treating AI agent access the same way you treat service account permissions. What can this agent read? What can it write? What's the blast radius if it goes wrong? The teams getting burned are the ones giving agents the same access as senior engineers on day one. Boris Cherny's team at Anthropic runs 10-15 parallel agent sessions — each in its own worktree, each on its own branch, each disposable if the work doesn't pass review.
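The read/write questions above can be written down as data rather than left as tribal knowledge. The sketch below is one hypothetical way to express a per-task scope; the `AgentScope` class and the paths are illustrative and not part of any agent product's API. Real enforcement belongs in the sandbox or file-system permissions, with a check like this as a second layer.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class AgentScope:
    """Directories an agent may read or write for a single task (illustrative)."""
    readable: tuple[str, ...]
    writable: tuple[str, ...]

    @staticmethod
    def _within(path: str, roots: tuple[str, ...]) -> bool:
        target = PurePosixPath(path)
        # Reject absolute paths and ".." segments so a path cannot escape its root.
        if target.is_absolute() or ".." in target.parts:
            return False
        return any(target.is_relative_to(root) for root in roots)

    def can_read(self, path: str) -> bool:
        return self._within(path, self.readable)

    def can_write(self, path: str) -> bool:
        return self._within(path, self.writable)


# Scope for a hypothetical billing task: broad read access, narrow write access.
scope = AgentScope(
    readable=("src", "tests", "docs"),
    writable=("src/billing", "tests/billing"),
)

print(scope.can_write("src/billing/invoice.py"))    # True
print(scope.can_write("infra/prod.tfvars"))         # False
print(scope.can_write("src/billing/../auth/x.py"))  # False
```

The blast radius question then has a concrete answer: whatever is listed under `writable`, and nothing else.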

5. Review diffs, not conversations

AI agents explain their changes confidently: "I've updated the authentication to validate tokens correctly." It sounds right. The PR description reads well. But the code has a subtle flaw nobody catches because the explanation was reviewed instead of the diff.

The conversation is marketing. The diff is the product. Engineering teams need to build the habit of reviewing AI-generated code at least as rigorously as human-written code — arguably more, because AI output passes the "looks right" test more consistently than it passes the "is right" test.

A practical shift that's gaining traction: use test quality as your fastest signal. If the AI wrote comprehensive tests that cover edge cases and security boundaries, the implementation is more likely to be sound. If the tests are shallow — only happy path, no error handling, no boundary cases — that's your red flag. But tests alone don't replace reviewing the diff, especially for changes that touch auth, data deletion, billing, or migrations. GitHub's new Critic agent automates part of this, but it's no substitute for a human reviewer who understands the business logic.
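One way to make the "always read the diff" rule for sensitive areas hard to skip is to flag those changes automatically. The sketch below is a rough heuristic under stated assumptions: the glob list is hypothetical, it matches on path names only, and it will produce false positives (for example, a path containing "authors").

```python
from fnmatch import fnmatchcase

# Hypothetical patterns for areas where a human must read the diff line by line.
SENSITIVE_GLOBS = ("*auth*", "*billing*", "*migrations/*", "*delete*")


def needs_human_review(changed_paths: list[str]) -> list[str]:
    """Return the changed paths that touch a sensitive area, sorted for stable output."""
    return sorted(
        path for path in changed_paths
        if any(fnmatchcase(path.lower(), glob) for glob in SENSITIVE_GLOBS)
    )


changed = ["README.md", "src/auth/tokens.py", "db/migrations/0042_add_index.sql"]
print(needs_human_review(changed))
# ['db/migrations/0042_add_index.sql', 'src/auth/tokens.py']
```

A CI job could use the result to require an additional named reviewer, so the decision does not depend on how convincing the PR description sounds.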

6. Design your tooling for agents, not just humans

A lesson from Armin Ronacher that most teams haven't internalized yet: "Crashes are tolerable. Hangs are fatal." When a test suite hangs, an AI agent burns tokens waiting — you're paying for an agent that's stuck. When it crashes, the agent gets an error message and self-corrects.

This extends to your entire developer tooling stack. Build scripts should exit with clear error codes. Test runners need timeouts. CI output should be concise — verbose logs eat the agent's context window, making it less effective at interpreting what went wrong. The less noise in your tooling, the better agents use the feedback.
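A minimal sketch of both ideas, assuming the agent invokes commands through a thin wrapper: a hard timeout turns a hang into a fast, explicit failure, and only the tail of the output is returned. The wrapper name, the line limit, and the reuse of exit code 124 (the convention of GNU `timeout`) are illustrative choices.

```python
import subprocess
import sys

TIMEOUT_EXIT_CODE = 124  # same convention as GNU `timeout`


def run_for_agent(cmd: list[str], timeout_s: float, max_lines: int = 40) -> tuple[int, str]:
    """Run a command, convert a hang into a failure, and return (exit_code, trimmed output)."""
    try:
        proc = subprocess.run(cmd, capture_output=True, text=True, timeout=timeout_s)
    except subprocess.TimeoutExpired:
        # subprocess.run kills the child before raising, so nothing is left hanging.
        return TIMEOUT_EXIT_CODE, f"TIMEOUT: {cmd[0]} exceeded {timeout_s}s and was killed"

    lines = (proc.stdout + proc.stderr).splitlines()
    tail = lines[-max_lines:]
    if len(lines) > max_lines:
        # Tell the agent that output was cut, and by how much.
        tail.insert(0, f"[{len(lines) - max_lines} earlier lines omitted]")
    return proc.returncode, "\n".join(tail)


if __name__ == "__main__":
    code, output = run_for_agent(
        [sys.executable, "-c", "import sys; sys.exit(3)"], timeout_s=10
    )
    print(code)  # 3
```

Keeping the last lines rather than the first is a judgment call: most test runners print their failure summary at the end.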

More broadly, this is a new category of developer experience work. Your team's build system, test infrastructure, and CI pipelines were designed for human operators. AI agents interact with them differently: they read every line of output, they can't ask a colleague what a cryptic error means, and you pay for them by the token. One team cut its agent token costs by 40% just by reducing test runner verbosity and adding structured error codes to its build scripts. Same models, same prompts, but a better signal-to-noise ratio in the tooling.

7. Build a learning system, not just a coding tool

The teams getting the most out of AI agents treat every mistake as a permanent fix, not a one-time correction. Every time the agent does something wrong, write a rule. Boris Cherny's team keeps a lessons file that grows with every correction. Week one is rough. By week four, the agent rarely repeats past mistakes. Compound interest starts working for you instead of against you.
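The mechanics of a lessons file can be very small. The sketch below appends a rule as a bullet and skips exact duplicates; the file name, the bullet format, and the function name are assumptions for illustration, not a description of how any specific team or tool stores its rules.

```python
from pathlib import Path


def record_lesson(lessons_file: Path, rule: str) -> bool:
    """Append `rule` as a bullet unless it is already recorded. Returns True if added."""
    rule = " ".join(rule.split())  # collapse whitespace so near-identical rules match
    bullet = f"- {rule}"
    existing = (
        lessons_file.read_text(encoding="utf-8").splitlines()
        if lessons_file.exists()
        else []
    )
    if bullet in existing:
        return False
    with lessons_file.open("a", encoding="utf-8") as handle:
        handle.write(bullet + "\n")
    return True


if __name__ == "__main__":
    # Hypothetical file that gets loaded into every agent session.
    added = record_lesson(Path("LESSONS.md"), "Never call the live payments API from tests")
    print("added" if added else "already recorded")
```

Committing the file alongside the code means each correction goes through review and reaches every engineer's agent sessions, not just the one where the mistake happened.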

The organizational version of this: maintain a shared AI configuration that encodes your team's architecture decisions, security requirements, and hard-won lessons. Version-control it. Review changes to it. Treat it as institutional knowledge that makes your AI agents better over time — not as individual settings that live on someone's laptop.

This is the real competitive advantage. Two teams using the same AI model, same pricing, same context window — the one with a refined, team-owned configuration will consistently outperform the one where every engineer starts from scratch.

When to vibe anyway

After seven rules about structure and guardrails, this needs saying: vibe coding isn't dead. It's a mode, not a mistake. The problem was using it for everything.

The organizations that thrive with AI won't be the ones that generate code fastest. They'll be the ones that build the best systems around it — guardrails that scale, feedback loops that compound, and engineering cultures that treat AI output with the same rigor they apply to anything going to production.

The tools changed. The engineering didn't.