If you've ever used a note-taking app seriously — Obsidian, Notion, Apple Notes, whatever — you know the feeling. Hundreds of notes, all saved, all searchable in theory. And you can barely find anything when you actually need it. We got good at saving stuff. Terrible at finding it again. Your second brain has amnesia.

The obvious fix is to point an LLM at it. In 2026, three very different approaches are trying to solve this — Obsidian plugins, Claude Code's AutoMemory, and Andrej Karpathy's LLM-compiled wiki. Here's what works, what doesn't, and practical tips for each.

There are dozens of AI plugins for Obsidian now: chat with your notes, find related notes, local-only privacy options, inline copilots. The ecosystem is fragmented, since every plugin needs its own API key and stores data differently.

Where it actually works

Despite the mess, some things genuinely work:

- Summarizing research. Point the LLM at a group of notes on a topic and ask for a summary. Works well because the source material is right there — the AI doesn't need to guess.

- Finding forgotten connections. Meaning-based search finds notes that keyword search never would. One user had the AI connect a meeting note from 2024 with a research paper saved six months later — a link they'd never have spotted themselves.

- Spotting patterns. Ask the LLM to look at your reading highlights and it picks up themes you didn't notice. Topics you keep coming back to. Questions you circle around without answering.

- Auto-tagging. LLMs can tag notes with more detail and consistency than most humans bother with. Great for vaults that grew without any tagging system.

Where it falls apart

The problems are real, and some of them are hard to fix.

RAG on personal notes is hard

RAG was built for clean, well-structured documents. Personal vaults are the opposite — shorthand, messy formatting, abbreviations that only make sense if you remember what happened that day. The splitting strategies that work on docs break on daily journals. The AI pulls back fragments that look relevant but miss the point.

Hallucination over personal data is uniquely bad

When an LLM makes up a fact about the French Revolution, you can look it up. When it makes up a meeting that didn't happen, or puts the wrong words in someone's mouth from your own notes, it's different. You might actually believe it. Trust breaks fast when the AI is confidently wrong about your own life.

The best fix I've found: make the AI quote the source note directly in every answer. If it can't quote it, it shouldn't claim the info exists.
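The quoting rule can also be checked mechanically. Here is a minimal sketch, assuming the AI wraps quoted passages in double quotes and that your notes are available as plain strings; the function names and the sample note are made up for illustration.

```python
import re


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so line wraps don't break matching."""
    return re.sub(r"\s+", " ", text).strip().lower()


def unverified_quotes(answer: str, notes: dict[str, str]) -> list[str]:
    """Return quoted passages in `answer` that appear in none of the notes."""
    quotes = re.findall(r'"([^"]+)"', answer)
    haystacks = [normalize(body) for body in notes.values()]
    return [q for q in quotes if not any(normalize(q) in h for h in haystacks)]


# Hypothetical note and answer, for illustration only.
notes = {
    "2024-03-12 standup.md": "Dana said the migration\nslips to Q3 because of the audit.",
}
answer = 'Dana said "the migration slips to Q3" and that "the budget was cut".'

print(unverified_quotes(answer, notes))  # ['the budget was cut']
```

Anything this returns is a quote the AI could not have copied from your vault. It only catches invented quotes, not wrong paraphrases, so treat it as a first filter rather than a guarantee.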

The privacy tension has no easy answer

Your Obsidian vault is probably the most personal data you have — more personal than email, because it's your raw, unfiltered thinking. Sending that to cloud APIs is a non-starter for many people. Running local models through Ollama keeps everything private, but the quality gap is real. Local models are slower, less smart, and struggle with the kind of nuance that personal notes need.

Context windows still aren't enough

Even with 200k+ token windows, a real vault won't fit. Two thousand notes at 500 words each is a million words, or roughly 1.3 to 1.5 million tokens depending on the tokenizer. So you need RAG, and RAG adds noise. You're always working with an incomplete slice of what you know.
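The arithmetic behind that estimate, as a rough sketch. The tokens-per-word ratio is an assumption: about 1.33 is a common rule of thumb for English prose, and a figure of 1.5 million tokens corresponds to a ratio of 1.5. Real counts depend on the tokenizer and on how much of the vault is code, links, or non-English text.

```python
def estimated_tokens(note_count: int, words_per_note: int,
                     tokens_per_word: float = 1.33) -> int:
    """Back-of-envelope token count for a vault; the ratio is an assumed average."""
    return round(note_count * words_per_note * tokens_per_word)


CONTEXT_WINDOW = 200_000  # the window size used in the text

vault_tokens = estimated_tokens(2000, 500)
windows_needed = vault_tokens / CONTEXT_WINDOW

print(f"{vault_tokens:,} tokens, about {windows_needed:.1f} context windows")
```

Either ratio lands at more than six full context windows, before leaving any room for the conversation itself.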

The MCP shift: from chat to agents

The bigger shift in 2026 isn't chat plugins — it's MCP. The Model Context Protocol, built by Anthropic, gives AI agents a standard way to connect to tools and data sources. Several MCP servers for Obsidian have appeared, and they change what's possible: instead of just answering questions about your notes, an MCP agent can create notes, update tags, and build connections. The AI goes from search box to collaborator.

One trade-off: MCP tools eat context. Every tool definition takes up tokens, leaving less room for your notes and conversation. For simple tasks, lightweight skills are more efficient — MCP shines when the agent needs real-time read/write access to your vault.

Claude Code's answer: AutoMemory and Auto-Dream

While the Obsidian community was adding AI to existing notes, Anthropic went the other way. They built notes into the AI itself.

AutoMemory, shipped in Claude Code v2.1.59, automatically saves knowledge across sessions. You don't write anything. Claude decides what's worth remembering — build commands that worked, debugging insights, architecture decisions, code style preferences, workflow patterns — and writes it to plain markdown files in ~/.claude/projects/.

    # Example: what AutoMemory captures automatically
    ~/.claude/projects/my-app/memory/
      MEMORY.md            # Index file, loaded at session start
      debugging.md         # Patterns learned from past debugging sessions
      api-conventions.md   # Style preferences inferred from your code
      architecture.md      # Decisions and rationale from conversations

At the start of each session, Claude loads the first 200 lines of MEMORY.md into context. Detailed topic files are read on demand. The memory is machine-local, stored as plain markdown, and not synced to Anthropic's servers.

Then came Auto-Dream.

Auto-Dream is a background agent that cleans up memory files between sessions. It reads everything, finds contradictions, turns relative dates into real ones (so "yesterday" still means something next month), removes notes about deleted files, and merges duplicates. Think of it as REM sleep for AI — the cleanup your brain does while you're not using it.
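To make the date cleanup concrete, here is a small sketch of the idea. It is not Anthropic's implementation, which isn't public in this detail. It assumes you know the date a memory entry was written and handles only three relative words.

```python
import re
from datetime import date, timedelta

# Only a few relative words are handled; a real cleanup pass would need more.
RELATIVE_DAYS = {"today": 0, "yesterday": -1, "tomorrow": 1}


def absolutize_dates(text: str, written_on: date) -> str:
    """Replace relative day words with ISO dates, anchored to when the note was written."""
    pattern = re.compile(r"\b(" + "|".join(RELATIVE_DAYS) + r")\b", re.IGNORECASE)

    def replace(match: re.Match) -> str:
        offset = RELATIVE_DAYS[match.group(1).lower()]
        return (written_on + timedelta(days=offset)).isoformat()

    return pattern.sub(replace, text)


print(absolutize_dates("Yesterday we switched the build to pnpm.", date(2026, 4, 10)))
# 2026-04-09 we switched the build to pnpm.
```

The key input is the date the note was written, not the date the cleanup runs. Without it, "yesterday" can't be resolved at all.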

Important safety detail: Auto-Dream can only touch memory files. It can read your code but never change it. It cleans up what the AI remembers, not what you've built.

As of April 2026, Auto-Dream is partially rolled out. The /memory menu references a /dream command, but it's gated by a server-side feature flag and returns "Unknown skill" for most users. The feature is clearly coming — Anthropic just hasn't flipped the switch for everyone yet.

The Karpathy approach: let the LLM write the whole wiki

Then there's the third approach — the most radical one. Andrej Karpathy recently shared his workflow, and it flips everything: the LLM doesn't search your notes. The LLM writes all the notes. You barely touch the wiki yourself.

The setup: dump raw sources (articles, papers, repos, images) into a raw/ folder. Then have an LLM "compile" a wiki — markdown files with summaries, backlinks, concept articles, and cross-links. Obsidian is just the viewer. The LLM does all the writing and organizing.

The part that surprised me most:

"I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale."

At ~100 articles and ~400K words, well-organized markdown with clean index files worked better than the RAG system he thought he'd need. The LLM keeps its own table of contents, so it knows where to look without vector search.
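A rough picture of what such an index file could look like, as a sketch. In Karpathy's workflow the LLM writes and maintains the summaries itself; here the first sentence of each article stands in for that step, and the function names and sample articles are invented for illustration.

```python
def first_sentence(body: str) -> str:
    """Use the first sentence of the first non-empty, non-heading line as a summary."""
    for line in body.splitlines():
        line = line.strip()
        if line and not line.startswith("#"):
            return line.split(". ")[0].rstrip(".") + "."
    return "(empty)"


def build_index(articles: dict[str, str]) -> str:
    """Render an index with one wiki link and one-line summary per article."""
    lines = ["# Index", ""]
    for title in sorted(articles):
        lines.append(f"- [[{title}]]: {first_sentence(articles[title])}")
    return "\n".join(lines)


# Hypothetical wiki content.
articles = {
    "Vector search": "# Vector search\nVector search ranks notes by embedding similarity. It needs an index.",
    "Backlinks": "Backlinks list every note that links to this one.",
}

print(build_index(articles))
```

An index like this is small enough to load in full, so the model can pick which articles to open next instead of relying on a similarity search.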

What makes it self-improving: answers go back into the wiki. Ask a question, get a markdown file with the answer, save it alongside everything else. Your research keeps building on itself. He also runs LLM "health checks" — scans that catch mistakes, fill in gaps, and suggest new articles to write. Basically Auto-Dream, but done by hand in an Obsidian vault.

Obsidian's CEO, Steph Ango (kepano), added an important point: keep your personal vault separate from your agent vault. Let the AI make a mess in its own space. Only move stuff into your main vault once it's proven useful. Otherwise you end up with ideas you can't source, and you won't know what's yours and what the AI made up.

Three approaches, same problem

Step back and the convergence is striking. Three different approaches to the same problem, each with a different answer to "who does the work?"

Obsidian's AI plugins struggle with messy notes and can't know what's in your head. Karpathy's wiki needs you to feed it sources and run cleanup passes — but it gets better over time on its own. Claude's AutoMemory needs zero effort but only knows what happens in your coding sessions. Each one trades control for convenience in a different way.

The real question isn't which tool to pick. It's how much control you want to keep versus how much you're willing to hand to the AI.

Making memory actually work: practical tips

Enough theory. Here's what actually makes a difference.

For Claude Code and AI coding agents

- Keep CLAUDE.md under 200 lines. It gets loaded as a regular message, not a system prompt. Long files lose weight as the conversation grows. Put topic-specific rules in .claude/rules/ with paths: frontmatter, so they only load when Claude opens matching files. Way more efficient.

- MEMORY.md has a 200 line / 25KB cap. Anything past that never gets loaded at the start. Claude puts details in topic files like debugging.md and reads them when needed. If your MEMORY.md is too big, just edit it. It's a regular markdown file.

- Be specific, not vague. "Use 2-space indentation" works. "Write clean code" gets ignored. The more concrete the rule, the more reliably the agent follows it.

- CLAUDE.md is for your rules. Auto memory is for Claude's observations. They work together but serve different jobs. CLAUDE.md holds standards you set. Auto memory holds things Claude figured out on its own. Don't put the same stuff in both.

- Say "remember this" when it matters. Claude doesn't save things to memory as often as you'd think. When you make a big decision, tell it explicitly. Or put in your CLAUDE.md: "When we make an architecture decision, save it to memory."

- Ask for a handoff summary before ending long sessions. Have Claude write down what was done, what was decided, and what's next. More reliable than hoping auto memory got it all, and gives the next session a clear starting point.

- Clean up memory every ~20 sessions. Run /memory, open the folder, delete the stale stuff. Old memories pile up with dead file references and dates like "yesterday" that mean nothing now. Fix or remove them.

- Use CLAUDE.local.md for your personal stuff. Sandbox URLs, test data, shortcuts: put them in CLAUDE.local.md (gitignored). It loads after CLAUDE.md, so your personal settings win without messing up the team config.
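If you want to know whether your MEMORY.md has outgrown the cap, a quick check is easy to script. This is a sketch, not an official tool: it uses the 200 line / 25KB figures quoted above, reads 25KB as 25 * 1024 bytes, and borrows the example project path from earlier, which you would need to adjust.

```python
from pathlib import Path

MAX_LINES = 200        # line cap quoted above
MAX_BYTES = 25 * 1024  # 25KB cap quoted above, assumed to mean 25 * 1024 bytes


def memory_index_report(text: str) -> dict:
    """Summarize how a MEMORY.md body compares with the assumed load caps."""
    lines = text.splitlines()
    size = len(text.encode("utf-8"))
    return {
        "lines": len(lines),
        "bytes": size,
        "over_line_cap": len(lines) > MAX_LINES,
        "over_byte_cap": size > MAX_BYTES,
        "lines_not_loaded": max(0, len(lines) - MAX_LINES),
    }


if __name__ == "__main__":
    # Hypothetical location; point this at your own project's memory folder.
    path = Path.home() / ".claude" / "projects" / "my-app" / "memory" / "MEMORY.md"
    if path.exists():
        print(memory_index_report(path.read_text(encoding="utf-8")))
```

A non-zero lines_not_loaded is the signal to move detail out of the index and into topic files.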

For Obsidian vaults with AI

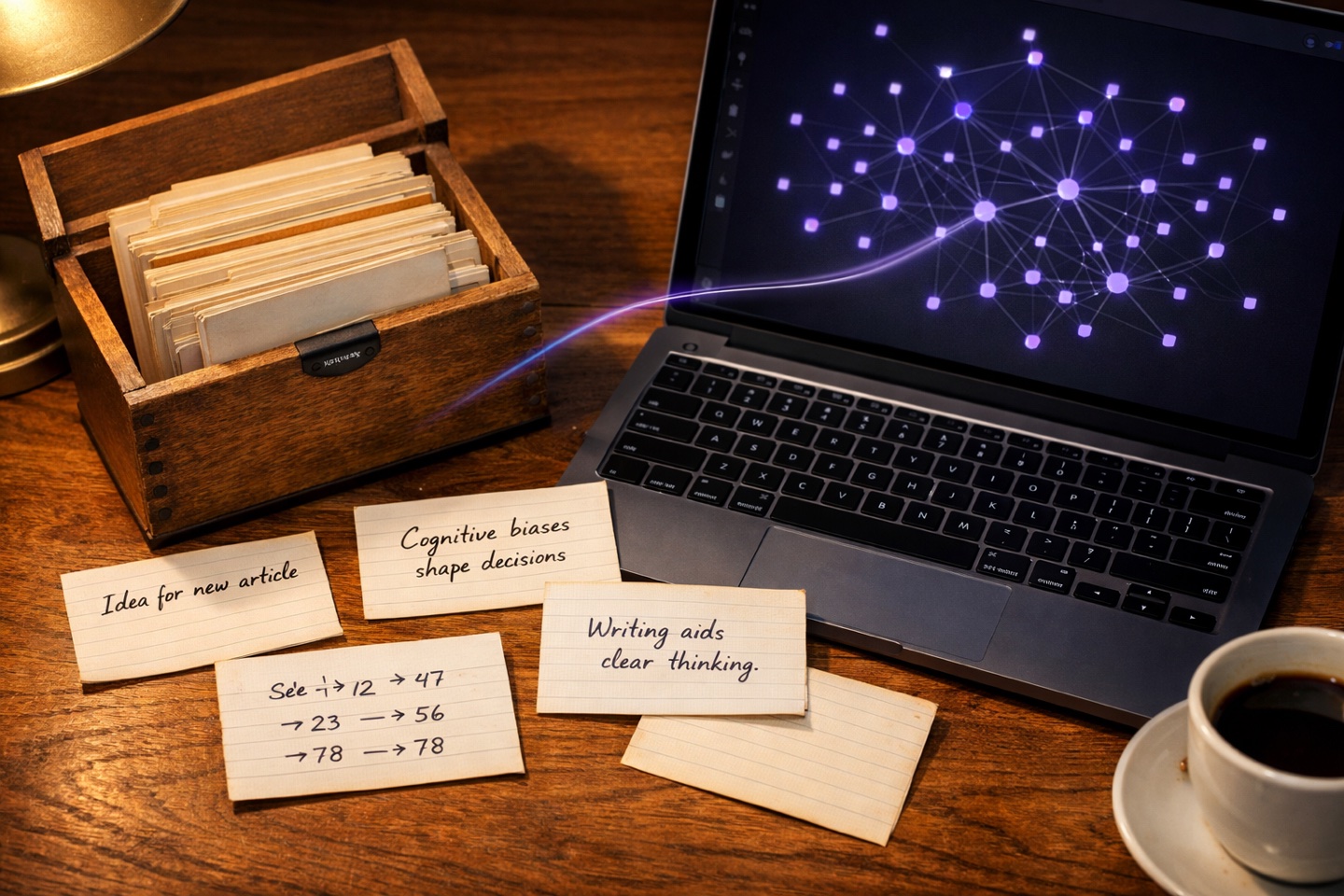

Quick background: there's a note-taking method called Zettelkasten — German for "slip box." Imagine a shoebox full of index cards. Each card has one idea on it. On the back, you write which other cards it connects to. That's the whole system. A sociologist named Niklas Luhmann used it to write 70+ books. The trick: his notes talked to each other. Obsidian users do the same thing with markdown files and [[wiki links]]. It turns out this approach — short notes, one idea each, linked together — works really well with AI search too.

The Zettelkasten method: index cards with one idea each, linked by hand — now done with markdown and wiki links

- Start every note with a clear statement. Not "Notes on X" but "X works because Y." AI search tools weigh the first sentence heavily. A good opener is its own summary.

- Aim for 200–800 words per note. Too short and there's not enough context. Too long and the good stuff gets buried. If a note passes ~1,000 words, split it — but add a sentence or two at the top of each part so it makes sense on its own.

- Add a summary field in frontmatter. Frontmatter is the block of metadata at the top of a markdown file, wrapped in --- lines. Obsidian uses it to store tags, dates, and custom fields. Adding a one- or two-sentence summary there gives AI search a strong signal, especially for messy notes where the body text is all shorthand.

- Write sentences, not just bullets. Bullet-heavy notes don't search well because each bullet has too little context on its own. Full sentences work much better for AI retrieval. Save bullets for actual lists.

- Tell the AI to stay in your vault. When asking questions, say "Use only my notes, not your general knowledge. If my notes don't cover this, tell me." Without this, LLMs quietly fill gaps with stuff from training data, and you can't tell what's yours.

- Make the AI quote its sources. If it can't quote the specific note, it shouldn't claim the info exists. This one rule kills most hallucination risk.

- Clean up as you go: tag-on-touch. Don't try to reorganize everything at once. Just spend 30 seconds adding frontmatter and a summary every time you open a note. After a few weeks, the notes you actually use are clean. The rest doesn't matter.

- Have AI write summary notes for you. Ask the LLM to read a group of related notes and write a single overview. These summaries become great search targets — better than any one source note — and they surface connections you missed.
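A few of these tips are easy to check with a script when you touch a note. The sketch below flags a missing summary field and notes outside the suggested length range. It assumes flat key: value frontmatter only (no nested YAML or lists), and the function names and sample note are made up for illustration.

```python
def split_frontmatter(note: str) -> tuple[dict[str, str], str]:
    """Split a note into flat `key: value` frontmatter fields and body text."""
    lines = note.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, note
    for i in range(1, len(lines)):
        if lines[i].strip() == "---":
            fields = {}
            for line in lines[1:i]:
                key, sep, value = line.partition(":")
                if sep:
                    fields[key.strip()] = value.strip()
            return fields, "\n".join(lines[i + 1:])
    return {}, note  # no closing delimiter, so treat the whole note as body


def note_issues(note: str) -> list[str]:
    """Flag a missing summary and lengths outside the range suggested above."""
    fields, body = split_frontmatter(note)
    words = len(body.split())
    issues = []
    if not fields.get("summary"):
        issues.append("missing summary")
    if words < 200:
        issues.append(f"short ({words} words)")
    elif words > 1000:
        issues.append(f"long ({words} words), consider splitting")
    return issues


# Hypothetical note with tags but no summary.
note = "---\ntags: rag\n---\nRAG breaks on daily journals because entries rely on context the text never states."

print(note_issues(note))  # ['missing summary', 'short (14 words)']
```

This fits the tag-on-touch habit: run it on the note you just opened, fix what it flags, and move on. The thresholds are guidelines, and a short note with a clear opening sentence can be perfectly fine.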

One thing worth keeping in mind across all of this: a post on the Obsidian forum puts it well — your vault works best as a thinking space, not a database. Use AI to find, summarize, and organize. But the part where you connect ideas and form opinions? That's yours. If you start writing notes for the AI instead of for yourself, you're optimizing for the wrong thing.

Three approaches, one problem. Obsidian users are adding AI to their notes from the bottom up. Karpathy is handing the whole wiki to the LLM. Anthropic is building it in from the top down. All three use markdown files. All three keep the files on your own machine, even when a cloud model reads them. And all three are still figuring out the same thing: what's worth remembering, and when should you forget?

Your second brain still has amnesia. But the treatment options are getting better fast.